What This Looks Like in Practice

Paid Search Audit Tool

The following examples show how Steps 4, 5, and 6 connect. A plain-language SOP for keyword review becomes a structured set of IF/THEN directives, which a coding agent then uses to produce a prioritized audit output. The channel is paid search, but the architecture is the same for any channel.

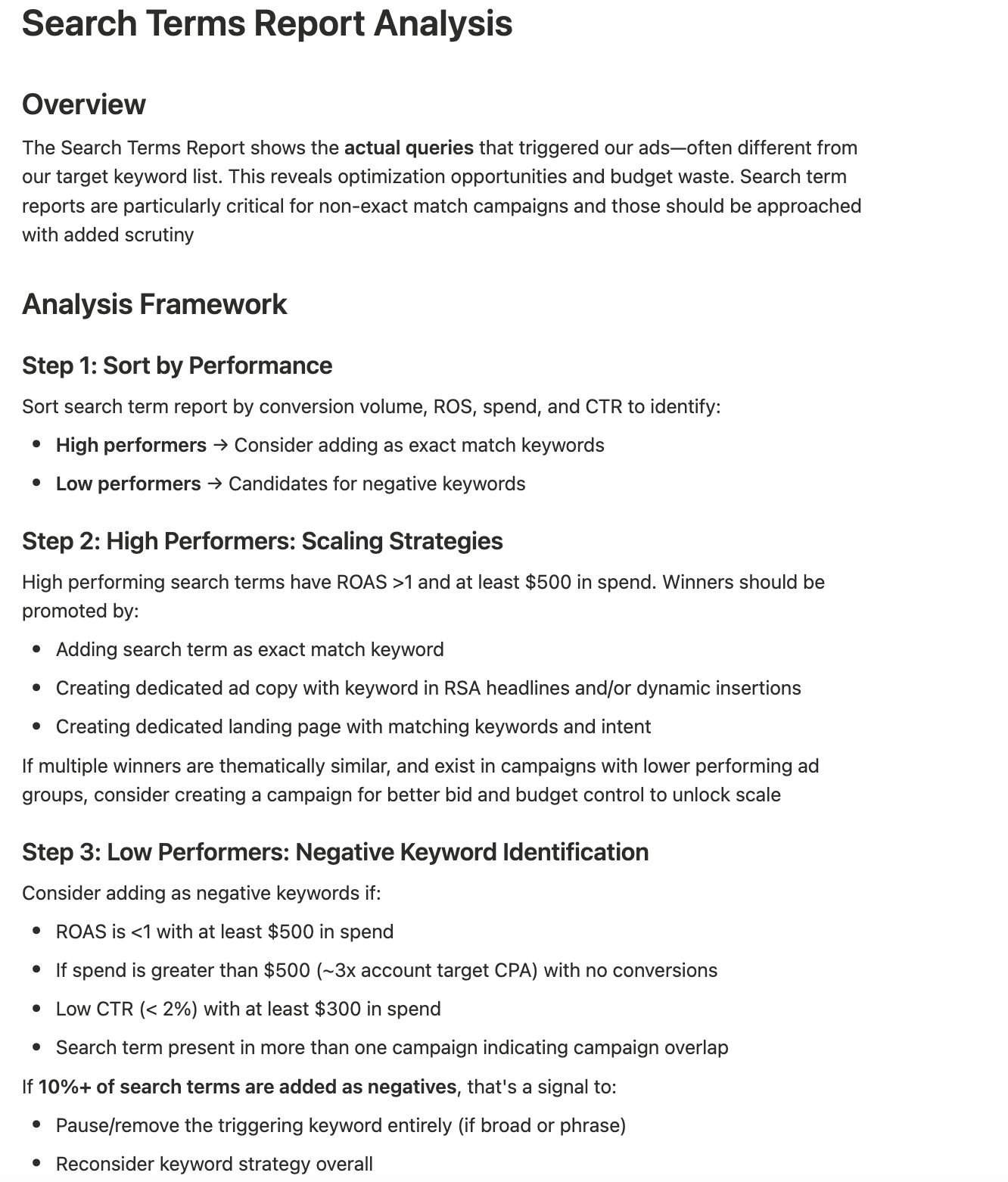

The SOP

Hard-won rules encoded in plain language.

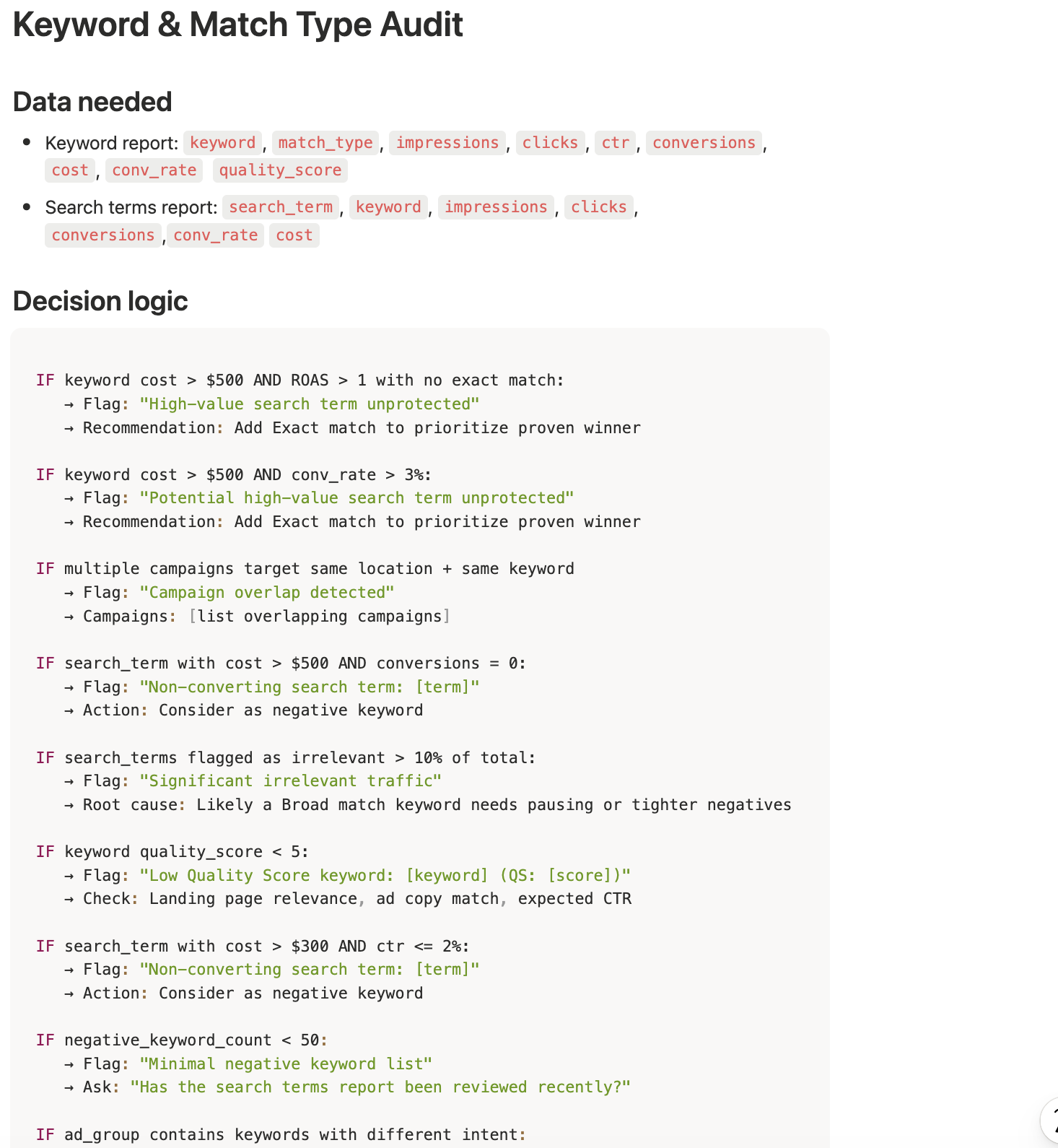

The Directives

Directives are your SOPs translated into a precise framework the model uses to evaluate the data it receives.

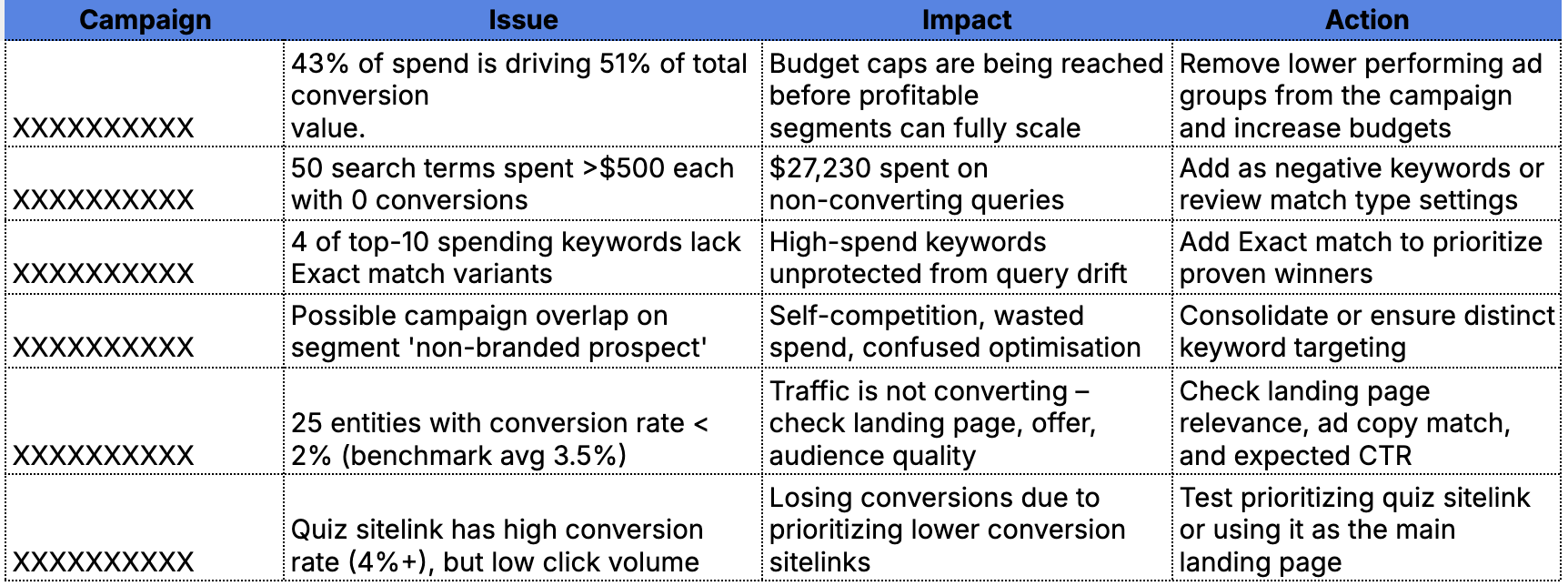
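Here is a minimal sketch of how IF/THEN directives could be made machine-readable. It assumes each keyword row arrives as a dictionary with `keyword`, `cost`, `clicks`, `impressions`, and `conversions` fields. The `Directive` structure, the field names, and the thresholds are hypothetical illustrations, not the directives in the figure above. Each directive pairs an IF condition with a THEN action, and every row is checked against every directive.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical row shape: {"keyword": str, "cost": float, "clicks": int,
#                          "impressions": int, "conversions": int}
Row = dict


@dataclass(frozen=True)
class Directive:
    name: str
    condition: Callable[[Row], bool]  # the IF part
    issue: str                        # what the finding is
    impact: str                       # "high" | "medium" | "low"
    action: str                       # the THEN part: the next step


# Hypothetical thresholds for illustration; real values come from your SOP.
DIRECTIVES = [
    Directive(
        name="spend_no_conversions",
        condition=lambda r: r["cost"] >= 100 and r["conversions"] == 0,
        issue="Spend with zero conversions",
        impact="high",
        action="Pause keyword or add as negative",
    ),
    Directive(
        name="low_ctr",
        # The impressions check runs first, so the division never sees zero.
        condition=lambda r: r["impressions"] >= 1000
        and r["clicks"] / r["impressions"] < 0.01,
        issue="CTR below 1% on meaningful volume",
        impact="medium",
        action="Rewrite ad copy or tighten match type",
    ),
]


def evaluate(rows: list[Row], directives: list[Directive]) -> list[dict]:
    """Apply every directive to every row and return one finding per match."""
    findings = []
    for row in rows:
        for d in directives:
            if d.condition(row):
                findings.append({
                    "keyword": row["keyword"],
                    "directive": d.name,
                    "issue": d.issue,
                    "impact": d.impact,
                    "action": d.action,
                })
    return findings


if __name__ == "__main__":
    rows = [
        {"keyword": "cheap shoes", "cost": 250.0, "clicks": 40,
         "impressions": 5000, "conversions": 0},
        {"keyword": "running shoes", "cost": 80.0, "clicks": 120,
         "impressions": 3000, "conversions": 6},
    ]
    for finding in evaluate(rows, DIRECTIVES):
        print(finding)
```

Keeping each rule as data rather than burying it in prompt prose makes the thresholds easy to audit and change. A single row can also trigger several directives, which is why findings are collected per match rather than per keyword.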

The Audit

What the tool produces: a prioritized table of issues, each with a clear impact and a specific next action. No interpretation is required: the model has already done it.
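The next example is a minimal sketch of the prioritization and output step. It assumes each finding is a dictionary with `keyword`, `issue`, `impact`, `action`, and `cost` fields. The three-tier impact scale and the spend-based tie-breaker are hypothetical choices for illustration, not the tool's actual ranking logic.

```python
# Hypothetical impact ranking: a lower number sorts earlier in the table.
IMPACT_RANK = {"high": 0, "medium": 1, "low": 2}


def prioritize(findings: list[dict]) -> list[dict]:
    """Order findings by impact tier, then by spend (largest first) within a tier."""
    return sorted(findings, key=lambda f: (IMPACT_RANK[f["impact"]], -f["cost"]))


def render_table(findings: list[dict]) -> str:
    """Render prioritized findings as a pipe-delimited plain-text table."""
    lines = [" | ".join(["#", "Keyword", "Issue", "Impact", "Next action"])]
    for i, f in enumerate(prioritize(findings), start=1):
        lines.append(" | ".join([str(i), f["keyword"], f["issue"], f["impact"], f["action"]]))
    return "\n".join(lines)


if __name__ == "__main__":
    findings = [
        {"keyword": "blue widgets", "issue": "CTR below 1%", "impact": "medium",
         "action": "Rewrite ad copy", "cost": 40.0},
        {"keyword": "free widgets", "issue": "Spend with zero conversions",
         "impact": "high", "action": "Add as negative", "cost": 120.0},
        {"keyword": "widget sale", "issue": "Spend with zero conversions",
         "impact": "high", "action": "Pause keyword", "cost": 310.0},
    ]
    print(render_table(findings))
```

Sorting on a `(tier, -spend)` tuple puts every high-impact issue first and, within a tier, surfaces the most expensive problem at the top. That ordering is one reasonable reading of "prioritized"; your SOP may weight issues differently.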